Enterprise enthusiasm for AI has never been higher. Boards are asking about it. Leadership teams are commissioning pilots. Technology vendors are repositioning every product around it. And yet the gap between AI ambition and AI deployment at scale remains stubbornly wide.

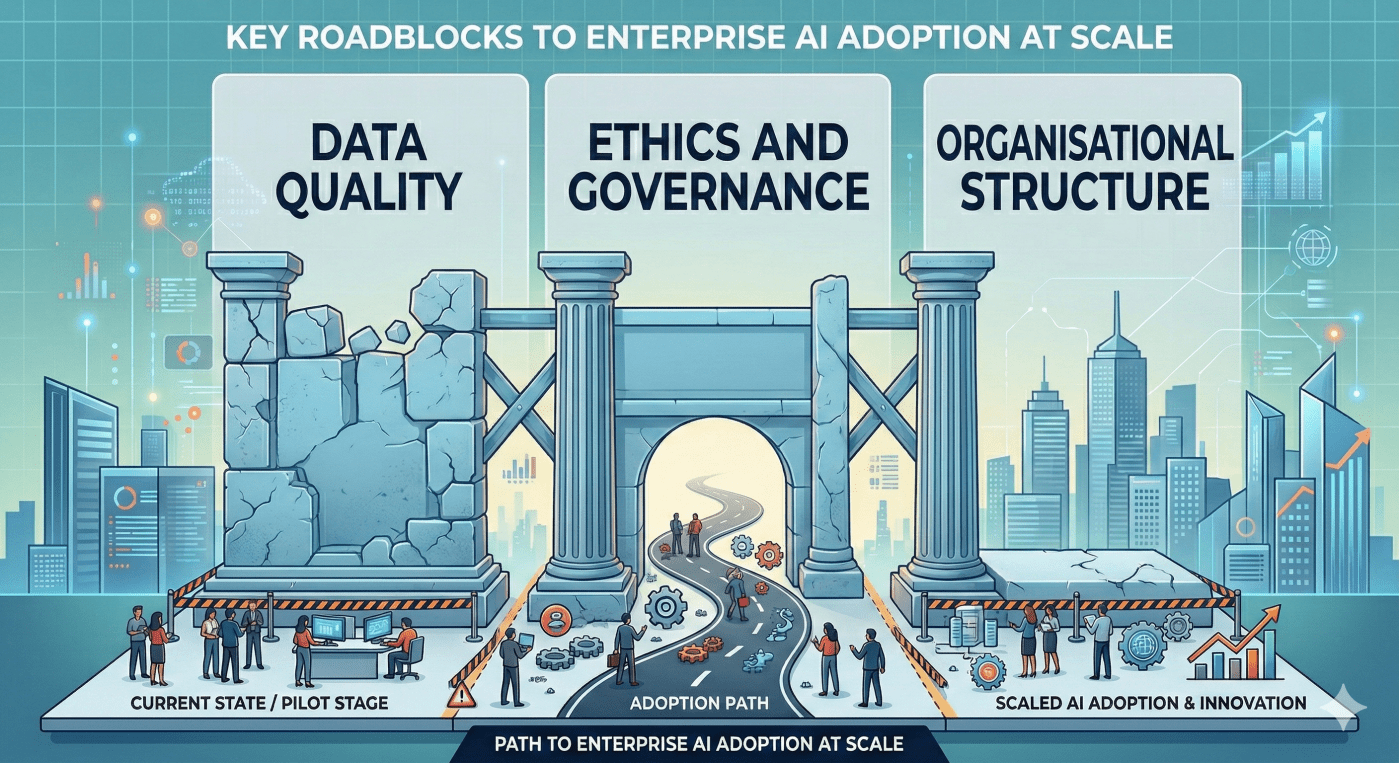

The constraint isn’t a shortage of capable technology. It’s three structural barriers that most enterprises haven’t yet resolved — and that no model, however capable, can resolve on its own.

Why Enthusiasm Doesn’t Become Adoption

The pilot-to-production problem in enterprise AI is well documented. A significant proportion of the AI models that enterprises develop never reach production, not because they don’t work technically, but because the organisational conditions for deploying them at scale don’t exist.

This connects directly to the observation made in my earlier post on enterprise AI readiness: the challenge was never the technology. The companies furthest ahead in AI deployment are those that solved the organisational prerequisites first. The three barriers standing between most enterprises and that outcome are distinct, interconnected, and worth understanding separately before addressing them together.

Barrier One: Data Governance

The first and most foundational barrier is also the least glamorous, and so it receives the least attention: data governance. As explored in depth in the earlier post on why data governance deserves executive attention, the AI readiness problem and the data governance problem are essentially the same problem viewed from different angles.

Most enterprises cannot confidently answer basic questions about their data: Who owns it? How was it collected? Is it current? Is it consistent across systems? What biases might it carry? These aren’t abstract concerns — they determine whether a model trained on enterprise data produces outputs that can be trusted in production.

The specific data challenges that tend to block enterprise AI deployment include:

- Inconsistent definitions — the same business concept defined differently across systems, making training data structurally unreliable

- Siloed ownership — data held in departmental silos with no cross-functional governance, making it impossible to assemble the comprehensive datasets that production AI systems require

- Quality degradation over time — data that was accurate at collection becoming stale, incomplete, or inconsistent without systematic quality management processes in place

- Lineage gaps — no traceable record of where data came from, how it was transformed, and what assumptions were embedded in that transformation

Enterprises that haven’t addressed these foundations discover them at the worst possible moment — when a production AI deployment produces outputs that nobody can explain or defend.
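To make the four data challenges above more concrete, here is a minimal Python sketch of the kind of automated checks a data catalog could run. Everything in it is hypothetical and for illustration only: the `CatalogEntry` record, its fields, the example systems and the 30-day freshness threshold are assumptions, not a reference to any particular governance tool. A missing owner is also only a rough proxy for siloed, ungoverned data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


# Hypothetical catalog record: one entry per (system, business concept) pair.
@dataclass
class CatalogEntry:
    system: str                      # source system holding the data
    concept: str                     # business concept, e.g. "active_customer"
    definition: str                  # how this system defines the concept
    last_refreshed: datetime         # when the data was last updated
    owner: Optional[str] = None      # accountable owner, if one is named
    lineage: tuple[str, ...] = ()    # recorded upstream sources and transformations


def inconsistent_definitions(entries):
    """Concepts that at least two systems define differently."""
    by_concept = {}
    for e in entries:
        by_concept.setdefault(e.concept, set()).add(e.definition)
    return sorted(c for c, defs in by_concept.items() if len(defs) > 1)


def unowned(entries):
    """Entries with no named owner."""
    return [(e.system, e.concept) for e in entries if not e.owner]


def stale(entries, max_age, now):
    """Entries not refreshed within max_age."""
    return [(e.system, e.concept) for e in entries if now - e.last_refreshed > max_age]


def missing_lineage(entries):
    """Entries with no recorded lineage."""
    return [(e.system, e.concept) for e in entries if not e.lineage]


# Illustrative usage with made-up entries.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
catalog = [
    CatalogEntry("crm", "active_customer", "purchase in last 12 months",
                 now - timedelta(days=1), owner="sales_ops", lineage=("web_orders",)),
    CatalogEntry("billing", "active_customer", "has an open subscription",
                 now - timedelta(days=2), owner="finance"),
    CatalogEntry("erp", "net_revenue", "revenue minus refunds",
                 now - timedelta(days=90), lineage=("ledger", "refunds_job")),
]

print(inconsistent_definitions(catalog))        # ['active_customer']
print(unowned(catalog))                          # [('erp', 'net_revenue')]
print(stale(catalog, timedelta(days=30), now))   # [('erp', 'net_revenue')]
print(missing_lineage(catalog))                  # [('billing', 'active_customer')]
```

Checks like these don't fix governance on their own; they surface the gaps early, before a production model depends on them.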

Barrier Two: Ethics and Responsible AI Governance

The second barrier has become far more visible alongside the rapid capability advances of generative AI: the ethical and governance dimensions of AI deployment.

The concerns are legitimate and well-founded. Algorithmic bias — where models produce systematically different outcomes for different demographic groups — has been documented across hiring, lending, healthcare diagnostics, and criminal justice applications. Transparency failures — where AI systems make consequential decisions that neither users nor operators can explain — create accountability gaps that expose organisations to regulatory, legal, and reputational risk. Fairness questions — about who benefits from AI systems and who bears their costs — are becoming part of procurement and regulatory conversations across multiple industries.

These concerns are legitimate, but they can also produce paralysis. Enterprises that acknowledge the ethical dimensions of AI without establishing a practical governance framework for navigating them tend to stall: rather than deploying AI responsibly, they end up not deploying it at all. The absence of a framework becomes its own form of governance failure.

The responsible AI governance structures worth building include:

- Explicit bias testing as part of model validation before any production deployment

- Documented model cards that capture what a model was trained on, what it’s designed to do, and what its known limitations are

- Human oversight requirements for high-stakes decisions

- Clear accountability chains that specify who is responsible when an AI system produces a harmful or incorrect output
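The practices above can be illustrated with a minimal Python sketch of a pre-deployment gate. It assumes a binary classifier and uses a single fairness check, the gap in positive-prediction rates between groups (demographic parity difference), against an arbitrary 0.1 threshold; real bias testing usually looks at several metrics. The `ModelCard` fields and the `release_blockers` function are hypothetical names chosen for this example, not an established API.

```python
from dataclasses import dataclass, field


# Hypothetical model card holding the information described in the text.
@dataclass
class ModelCard:
    name: str
    training_data: str                 # what the model was trained on
    intended_use: str                  # what it is designed to do
    known_limitations: list[str] = field(default_factory=list)
    accountable_owner: str = ""        # who answers for harmful or incorrect outputs
    human_review: bool = False         # whether a person reviews each decision


def selection_rates(predictions, groups):
    """Share of positive (1) predictions for each group."""
    totals, positives = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + pred
    return {g: positives[g] / totals[g] for g in totals}


def release_blockers(card, predictions, groups, high_stakes, max_gap=0.1):
    """Return the issues blocking deployment; an empty list means the gate passes."""
    issues = []
    rates = selection_rates(predictions, groups)
    gap = max(rates.values()) - min(rates.values())  # demographic parity difference
    if gap > max_gap:
        issues.append(f"selection-rate gap {gap:.2f} exceeds {max_gap}")
    if not card.known_limitations:
        issues.append("model card lists no known limitations")
    if high_stakes and not card.human_review:
        issues.append("high-stakes use without human review")
    if not card.accountable_owner:
        issues.append("no accountable owner named")
    return issues


# Illustrative usage with made-up predictions for two groups, "a" and "b".
card = ModelCard(
    name="loan-screening-v1",
    training_data="historical applications (illustrative)",
    intended_use="rank applications for human review",
    known_limitations=["few examples for applicants under 21"],
    human_review=True,
)
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(release_blockers(card, preds, groups, high_stakes=True))
# ['selection-rate gap 0.50 exceeds 0.1', 'no accountable owner named']
```

A gate like this does not decide whether a model is fair; it only makes sure the questions above are asked and answered, in writing, before deployment.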

These aren’t compliance gestures — they’re the foundations that make AI deployment defensible to the regulators, customers, and boards that are increasingly asking about them.

Barrier Three: Organisational Structure

The third barrier is structural and, in many ways, the hardest to fix because it requires changing how people work rather than how systems are built.

AI capability in most enterprises lives in isolated pockets — dedicated data science teams, innovation labs, or centres of excellence — that are structurally disconnected from the business operations they’re meant to serve. When an AI team develops a model that genuinely works, deploying it into production requires crossing organisational boundaries that weren’t designed to be crossed: integrating with existing systems owned by different teams, navigating IT governance processes built for different kinds of software, securing business unit adoption from teams that had no involvement in building the solution, and resolving accountability questions that the organisational chart doesn’t cleanly answer.

The result is a structural drag between AI development and AI deployment that no amount of technical capability eliminates. Models get built and then sit — not because they don’t work, but because the organisational path to production doesn’t exist.

The structural changes that address this pattern consistently involve bringing AI capability closer to where business decisions are made: embedding data scientists in product and operations teams rather than centralising them in separate functions; creating clear accountabilities for AI deployment that span the technical and business sides; and establishing governance processes for AI deployment that are streamlined enough to actually be used rather than circumvented.

Why These Three Barriers Are Connected

The critical insight about these three barriers is that they compound each other. Weak data governance undermines the reliability of models, which amplifies ethical concerns about bias and accuracy, which increases organisational resistance to deployment, which weakens the case for investing in data governance. The cycle is self-reinforcing.

This is why working on them in parallel tends to produce better outcomes than addressing them strictly in sequence (fixing data governance first, then building ethics frameworks, then solving organisational structure) or, more commonly, hoping that the technology will somehow resolve problems that are fundamentally organisational in nature.

The enterprises making the most progress on AI adoption are those that have recognised the interdependency and are working on the foundations deliberately — not as prerequisites to be completed before AI work begins, but as parallel investments that make each AI deployment better than the last.

What This Means for Founders Building Enterprise AI

For founders building products in the enterprise AI space, these three barriers are not just problems customers face — they are the product opportunity. The enterprises most willing to invest in enterprise AI solutions right now are those feeling the specific pain of one or more of these barriers acutely.

Data governance tooling, responsible AI frameworks, and organisational enablement for AI deployment are all areas where enterprise willingness to pay tends to rise with the severity of the barrier being felt. Founders building products that address a specific, well-understood barrier, and that can demonstrate measurable progress against it, are in a fundamentally stronger commercial position than those selling general AI capability into an environment where the blockers haven’t been named.

Which of these three barriers is most acute in the organisations you’re working with or building for — and where is the most progress being made? The technology is ready. The question is whether the foundations are. Let’s keep learning — together.
